About me

I am a math student and programming enthusiast constantly seeking improvement.

github.com/ldionne

Overview

- The target: lock order inconsistencies

- Existing solutions

- d2: a library-based approach

- Design and usage

- Algorithm

- Roadmap

- Towards dyno: a dynamic analysis library

Lock order inconsistencies

Example #1

mutex A, B;

thread t1([&] {

scoped_lock a(A);

scoped_lock b(B);

});

thread t2([&] {

scoped_lock b(B);

scoped_lock a(A);

});

Example #2

mutex A, B, C;

thread t1([&] {

scoped_lock a(A);

scoped_lock b(B);

});

thread t2([&] {

scoped_lock b(B);

scoped_lock c(C);

});

thread t3([&] {

scoped_lock c(C);

scoped_lock a(A);

});

Same principle but harder to catch

Thread scheduling usually varies very little between runs of the same code under the same conditions. For this reason, odds are that rare deadlocks make it to production and only show up under "extreme" conditions.

Why are they so vicious?

- Non-deterministic

- Often uncaught by unit tests

- Difficult to reproduce

We would like to detect them automatically and before they happen

Existing solutions

Never hold more than one lock at once

- Not realistic for non-trivial programs

Determine a hierarchy among locks and respect it

- Hard to enforce for non-trivial programs
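A minimal sketch of what enforcing a hierarchy could look like, assuming each mutex is given a numeric level by hand and that locks are released in reverse order of acquisition. The `hierarchical_mutex` class is hypothetical and not part of d2, Boost or the standard library; it turns an out-of-order acquisition into an exception at the point where it happens:

```cpp
#include <climits>
#include <mutex>
#include <stdexcept>

// Hypothetical helper: a mutex that carries a level in a lock hierarchy
// and refuses acquisitions that do not go strictly down the hierarchy.
class hierarchical_mutex {
public:
    explicit hierarchical_mutex(unsigned long level) : level_(level) {}

    void lock() {
        // A thread may only acquire a mutex whose level is strictly lower
        // than the level of the innermost mutex it currently holds.
        if (current_level() <= level_)
            throw std::logic_error("lock hierarchy violated");
        mutex_.lock();
        previous_level_ = current_level();
        current_level() = level_;
    }

    void unlock() {
        // Assumes locks are released in reverse order of acquisition.
        current_level() = previous_level_;
        mutex_.unlock();
    }

private:
    // Level of the innermost mutex held by the calling thread.
    static unsigned long& current_level() {
        thread_local unsigned long level = ULONG_MAX;
        return level;
    }

    std::mutex mutex_;
    unsigned long const level_;
    unsigned long previous_level_ = 0;
};
```

Every mutex in the program has to go through such a wrapper and be assigned a consistent level, which is the part that gets hard for non-trivial programs.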

Disturb thread scheduling to provoke hidden deadlocks

- Requires several runs of the program

- Good idea that could be mixed with other approaches

Use an algorithm to break deadlocks when they happen

- Overhead required to check for deadlock conditions

- Policy for breaking deadlocks can't be pretty: kill the thread

- Misses the point: deadlocks are a bug, not a runtime mishap

Intel® Inspector XE

- Detects deadlocks involving up to 4 threads only

- Huge overhead

- Proprietary and costly license

- Exact capabilities for deadlock detection unknown

Valgrind (Helgrind)

- Runs the program on a virtual processor, one thread at a time

- Limited to POSIX pthreads threading primitives

d2: a library-based approach

Goals

- Detect lock order inconsistencies between N threads

- Support custom locks and threads easily

- Low overhead when enabled, none when disabled

- Very few false positives

Why intrusive?

Easier to implement

Design and usage

d2 needs to record 4 types of events

- Lock acquires and releases

- Thread starts and joins

High level API with concepts from Boost.Thread

boost::BasicLockable

boost::Lockable

boost::TimedLockable

Simply wrap your class with the corresponding wrapper

class untracked_mutex {

public:

void lock();

void unlock();

};

typedef d2::basic_lockable<untracked_mutex> mutex;

All wrappers have a recursive counterpart

d2::recursive_basic_lockable

d2::recursive_lockable

d2::recursive_timed_lockable

You can also bypass the concept-based API

class mutex

: d2::trackable_sync_object<d2::non_recursive>

{

public:

void some_method_to_lock() {

// normal code

this->notify_lock();

}

void some_method_to_unlock() {

// normal code

this->notify_unlock();

}

};

Tracking standard-conforming threads is easy

class untracked_thread {

// ...

};

typedef d2::standard_thread<untracked_thread> thread;

Tracking non-standard thread implementations is possible too

class thread : d2::trackable_thread<thread> {

public:

template <typename F, typename ...Args>

void some_method_to_start(F&& f, Args&& ...args) {

typedef d2::thread_function<F> F_;

F_ f_ = this->get_thread_function(f);

// normal code using F_ and f_

}

};

Tracking non-standard thread implementations is possible too

class thread : d2::trackable_thread<thread> {

public:

void some_method_to_join() {

// normal code

this->notify_join();

}

};

Tracking non-standard thread implementations is possible too

class thread : d2::trackable_thread<thread> {

public:

void some_method_to_detach() {

// normal code

this->notify_detach();

}

};

Don't forget to modify these

thread(thread&& other);

thread& operator=(thread&& other);

friend void swap(thread& a, thread& b);

Low level API (for eventual bindings)

namespace d2 { namespace core {

notify_acquire(std::size_t thread, std::size_t lock);

notify_release(std::size_t thread, std::size_t lock);

notify_recursive_acquire(std::size_t thread, std::size_t lock);

notify_recursive_release(std::size_t thread, std::size_t lock);

notify_start(std::size_t parent, std::size_t child);

notify_join(std::size_t parent, std::size_t child);

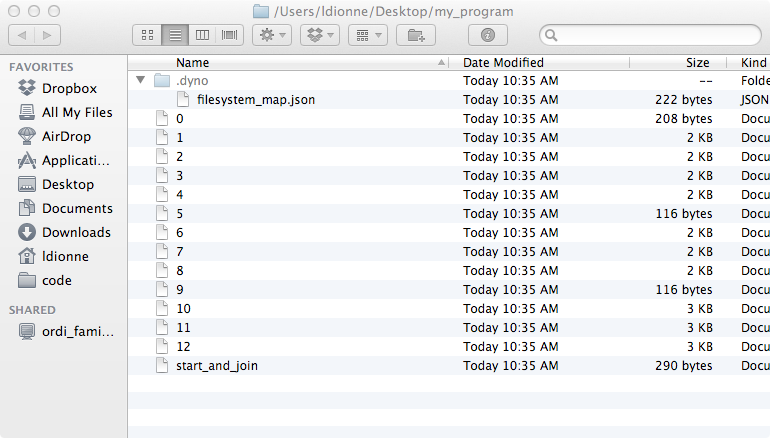

}}Events are generated and dispatched to the filesystem

Mumbo jumbo on disk

$ cat my_program/1 # thread 1

22 serialization::archive 10 0 0 4 0 0 0 0 1 0 0 2 0 0 0 0 0 1 0 0 2 0 0 0 0 0 0 0 1 3 0 0 0 1 4 0 0 0 1 5 0 0 0 1 6 0 0 0 1 7 0 0 0 1 8 0 0 0 1 9 0 0 0 1 10 0 0 0 1 11 0 0 0 1 12 0 0 0 1 13 0 0 0 1 14 0 0 0 1 15 0 0 0 1 16 0 0 0 1 17 0 0 0 1 18 0 0 0 1 19 0 0 0 1 20 0 0 0 1 21 0 0 0 1 22 0 0 0 1 23 0 0 0 1 24 0 0 0 1 25 0 0 0 1 26 0 0 0 1 27 0 0 0 1 28 0 0 0 1 29 0 0 0 1 30 0 0 0 1 31 0 0 0 1 32 0 0 0 1 33 0 0 0 1 34 0 0 0 1 35 0 0 0 1 36 0 0 0 1 37 0 0 0 1 38 0 0 0 1 39 0 0 0 1 40 0 0 0 1 41 0 0 0 1 42 0 0 0 1 43 0 0 0 1 44 0 0 0 1 45 0 0 0 1 46 0 0 0 1 47 0 0 0 1 48 0 0 0 1 49 0 0 0 1 50 0 0 0 1 51 0 0 0 1 52 0 0 0 1 53 0 0 0 1 54 0 0 0 1 55 0 0 0 1 56 0 0 0 1 57 0 0 0 1 58 0 0 0 1 59 0 0 0 1 60 0 0 0 1 61 0 0 0 1 62 0 0 0 1 63 0 0 0 1 64 0 0 0 1 65 0 0 0 1 66 0 0 0 1 67 0 0 0 1 68 0 0 0 1 69 0 0 0 1 70 0 0 0 1 71 0 0 0 1 72 0 0 0 1 73 0 0 0 1 74 0 0 0 1 75 0 0 0 1 76 0 0 0 1 77 0 0 0 1 78 0 0 0 1 79 0 0 0 1 80 0 0 0 1 81 0 0 0 1 82 0 0 0 1 83 0 0 0 1 84 0 0 0 1 85 0 0 0 1 86 0 0 0 1 87 0 0 0 1 88 0 0 0 1 89 0 0 0 1 90 0 0 0 1 91 0 0 0 1 92 0 0 0 1 93 0 0 0 1 94 0 0 0 1 95 0 0 0 1 96 0 0 0 1 97 0 0 0 1 98 0 0 0 1 99 0 0 0 1 100 0 0 0 1 101 0 0 1 0 0 1 101 0 0 1 1 100 0 0 1 1 99 0 0 1 1 98 0 0 1 1 97 0 0 1 1 96 0 0 1 1 95 0 0 1 1 94 0 0 1 1 93 0 0 1 1 92 0 0 1 1 91 0 0 1 1 90 0 0 1 1 89 0 0 1 1 88 0 0 1 1 87 0 0 1 1 86 0 0 1 1 85 0 0 1 1 84 0 0 1 1 83 0 0 1 1 82 0 0 1 1 81 0 0 1 1 80 0 0 1 1 79 0 0 1 1 78 0 0 1 1 77 0 0 1 1 76 0 0 1 1 75 0 0 1 1 74 0 0 1 1 73 0 0 1 1 72 0 0 1 1 71 0 0 1 1 70 0 0 1 1 69 0 0 1 1 68 0 0 1 1 67 0 0 1 1 66 0 0 1 1 65 0 0 1 1 64 0 0 1 1 63 0 0 1 1 62 0 0 1 1 61 0 0 1 1 60 0 0 1 1 59 0 0 1 1 58 0 0 1 1 57 0 0 1 1 56 0 0 1 1 55 0 0 1 1 54 0 0 1 1 53 0 0 1 1 52 0 0 1 1 51 0 0 1 1 50 0 0 1 1 49 0 0 1 1 48 0 0 1 1 47 0 0 1 1 46 0 0 1 1 45 0 0 1 1 44 0 0 1 1 43 0 0 1 1 42 0 0 1 1 41 0 0 1 1 40 0 0 1 1 39 0 0 1 1 38 0 0 1 1 37 0 0 1 1 36 0 0 1 1 35 0 0 1 1 34 0 0 1 1 33 0 0 1 1 32 0 0 1 1 31 0 0 1 1 30 0 0 1 1 29 0 0 1 1 28 0 0 1 1 27 0 0 1 1 
26 0 0 1 1 25 0 0 1 1 24 0 0 1 1 23 0 0 1 1 22 0 0 1 1 21 0 0 1 1 20 0 0 1 1 19 0 0 1 1 18 0 0 1 1 17 0 0 1 1 16 0 0 1 1 15 0 0 1 1 14 0 0 1 1 13 0 0 1 1 12 0 0 1 1 11 0 0 1 1 10 0 0 1 1 9 0 0 1 1 8 0 0 1 1 7 0 0 1 1 6 0 0 1 1 5 0 0 1 1 4 0 0 1 1 3 0 0 1 1 2 0 0

d2tool loads the events, constructs the graphs and performs the analysis. The output shown was cropped and edited a bit for clarity.

d2tool speaks that mumbo jumbo

$ d2tool --analyze myprogram

in thread #X started at [location]:

holds object #Y acquired at [location]

holds object #Z acquired at [location]

...

tries to acquire object #W at [location]

in thread #XX started at [location]:

holds object #YY acquired at [location]

holds object #ZZ acquired at [location]

...

tries to acquire object #WW at [location]

where each [location] is a complete call stack:

$ d2tool --analyze myprogram

in thread #2 started at [no location information]:

holds object #1 acquired at

[...]/scenario_ABBA main::$_1::operator()() const

[...]/scenario_ABBA boost::detail::function::void_function_obj_invoker0<main::$_1, void>::invoke(boost::detail::function::function_buffer&)

[...]/scenario_ABBA boost::function0<void>::operator()() const

[...]/scenario_ABBA d2mock::thread::impl::impl(boost::function<void ()> const&)::'lambda'()::operator()() const

[...]/scenario_ABBA d2::thread_function<d2mock::thread::impl::impl(boost::function<void ()> const&)::'lambda'()>::result<d2::thread_function<d2mock::thread::impl::impl(boost::function<void ()> const&)::'lambda'()> ()>::type d2::thread_function<d2mock::thread::impl::impl(boost::function<void ()> const&)::'lambda'()>::operator()<>()

[...]/scenario_ABBA boost::detail::thread_data<d2::thread_function<d2mock::thread::impl::impl(boost::function<void ()> const&)::'lambda'()> >::run()

[...]/libboost_thread-mt.dylib thread_proxy

[...]/libsystem_c.dylib _pthread_start

[...]/libsystem_c.dylib thread_start

Current limitations:

- Output is not as nicely formatted

- Thread starts have no location information

The algorithm

Disclaimer

I am not the author of the algorithm. It is presented in:

"Detection of deadlock potentials in multithreaded programs"

IBM Journal of Research and Development, vol. 54, no. 5, pp. 3:1-3:15

Sept.-Oct. 2010

A note for the rest of the presentation:

For brevity, unlocking mutexes and joining threads will often be omitted.

When omitted, assume the mutexes are unlocked in reverse order of locking

and threads are joined in reverse order of starting.

So these are the same:

mutex A, B;

A.lock();

B.lock();

B.unlock();

A.unlock();

and

mutex A, B;

A.lock();

B.lock();

And so are these:

thread t1([] {});

thread t2([] {});

t2.join();

t1.join();

and

thread t1([] {});

thread t2([] {});

We will be adding annotations to the graph to encode more information, such as segmentation and gatelocks. We start with the most basic graph and add annotations as we go to improve the algorithm.

The basic idea is to build a graph where:

- Vertices represent synchronization objects

- An edge from u to v means that a thread acquired v while holding u

- A cycle in the graph represents a potential deadlock
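The basic graph can be sketched in a few lines. This is only an illustration under simple assumptions (non-recursive locks, integer identifiers for locks and threads); the `lock_graph` class and its member names are made up for this example and are not d2's actual implementation:

```cpp
#include <cstddef>
#include <map>
#include <set>
#include <vector>

// Illustrative sketch only; the names below are not part of d2's API.
class lock_graph {
public:
    using lock_id = std::size_t;
    using thread_id = std::size_t;

    // Record that `thread` acquired `lock`: add an edge from every lock
    // the thread already holds to the newly acquired one.
    void acquire(thread_id thread, lock_id lock) {
        edges_[lock]; // make sure the vertex exists, even without edges
        for (lock_id held : held_[thread])
            edges_[held].insert(lock); // a set, so no redundant edges
        held_[thread].push_back(lock);
    }

    // Releases only update the locks held; the graph is not modified.
    void release(thread_id thread, lock_id lock) {
        std::vector<lock_id>& held = held_[thread];
        for (auto it = held.begin(); it != held.end(); ++it)
            if (*it == lock) { held.erase(it); break; }
    }

    // A cycle in the graph represents a potential deadlock.
    bool has_cycle() const {
        std::map<lock_id, int> state; // 0 = unvisited, 1 = on stack, 2 = done
        for (auto const& vertex : edges_)
            if (state[vertex.first] == 0 && visit(vertex.first, state))
                return true;
        return false;
    }

private:
    // Depth-first search; reaching a vertex that is still on the stack
    // means we followed a back edge, i.e. found a cycle.
    bool visit(lock_id u, std::map<lock_id, int>& state) const {
        state[u] = 1;
        for (lock_id v : edges_.at(u)) {
            if (state[v] == 1) return true;
            if (state[v] == 0 && visit(v, state)) return true;
        }
        state[u] = 2;
        return false;
    }

    std::map<lock_id, std::set<lock_id>> edges_;     // u -> {v, ...}
    std::map<thread_id, std::vector<lock_id>> held_; // locks held per thread
};
```

Feeding it the acquires and releases of two threads that lock A then B and B then A respectively makes `has_cycle()` return true.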

Two nodes are created because we created two locks, but no edges are created because we did not lock anything.

Example #1

mutex A, B;

Example #1

No edge is created; the main thread does not hold anything when it acquires A.

mutex A, B;

A.lock();

Example #1

The main thread holds A when it acquires B; we add an edge from A to B.

mutex A, B;

A.lock();

B.lock();

Example #1

The graph is not modified on releases.

mutex A, B;

A.lock();

B.lock();

B.unlock();

A.unlock();

Since there is already an edge from A to B in the graph, we don't add it redundantly if a thread locks B again while holding A.

Example #1

We don't add redundant edges.

mutex A, B;

A.lock();

B.lock();

B.unlock();

A.unlock();

A.lock();

B.lock();

Example #2

mutex A, B, C, D;

A.lock();

B.lock();

Example #2

We're really computing the transitive closure of the "is held by a thread when acquiring X" relation.

mutex A, B, C, D;

A.lock();

B.lock();

C.lock();

Example #2

We're really computing the transitive closure of the "is held by a thread when acquiring X" relation.

mutex A, B, C, D;

A.lock();

B.lock();

C.lock();

D.lock();

Example #3: A potential deadlock

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

// ...

Clearly, there is a cycle in the graph iff two locks were acquired in some order and then acquired in a different order.

Example #3: A potential deadlock

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

thread t2([&] {

B.lock();

A.lock();

});

Example #4: Another potential deadlock

mutex A, B, C, D;

thread t1([&]{

A.lock();

B.lock();

C.lock();

D.lock();

});

// ...

Example #4: Another potential deadlock

mutex A, B, C, D;

thread t1([&]{

A.lock();

B.lock();

C.lock();

D.lock();

});

thread t2([&]{

D.lock();

B.lock();

});

Example #5: It works for an arbitrary number of threads

mutex A, B, C;

thread t1([&] {

A.lock();

B.lock();

});

thread t2([&] {

B.lock();

C.lock();

});

thread t3([&] {

C.lock();

A.lock();

});

Since there is only one thread involved, a deadlock can't possibly happen. More generally, all the code in this thread is implicitly serialized (because it runs in a single thread of execution), so there is an implicit happens-before relationship between its statements. Note that a deadlock could still happen if a non-recursive lock were locked recursively by a thread. However, whether such a deadlock happens depends only on whether the code path leading to it is taken; in other words, it is deterministic as far as thread scheduling is concerned. If the code path is taken, the deadlock is certain to happen. Otherwise, it won't happen and we won't detect anything anyway, since the code path was not taken (and we're doing dynamic analysis).

However, the algorithm can report false positives. Consider:

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

B.unlock();

A.unlock();

B.lock();

A.lock();

A.unlock();

B.unlock();

});

If a cycle contains two edges labelled with the same thread, it is ignored because the represented deadlock would require code in the same thread to run concurrently, which is impossible. This is effectively a special case of the happens-before relationship.

Let's augment the lock graph by labelling each edge with the thread that caused that edge to be added.

We will ignore cycles containing two edges labelled with the same thread.
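As a rough sketch of that filter, assume a cycle has already been extracted from the graph as a list of edges. The `labelled_edge` struct and the `threads_are_distinct` function are hypothetical names chosen for this example:

```cpp
#include <cstddef>
#include <set>
#include <vector>

// Illustrative types; not d2's actual data structures.
struct labelled_edge {
    std::size_t from;   // lock that was held
    std::size_t to;     // lock that was acquired
    std::size_t thread; // thread that caused the edge to be added
};

// Returns true if the cycle should still be reported, i.e. if every
// edge in it was added by a different thread.
bool threads_are_distinct(std::vector<labelled_edge> const& cycle) {
    std::set<std::size_t> seen;
    for (labelled_edge const& e : cycle)
        if (!seen.insert(e.thread).second)
            return false; // two edges from the same thread: ignore the cycle
    return true;
}
```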

Example #6

No labels, no edges, like the basic graph.

mutex A, B;

// ...

Example #6

When an edge is added, we label it with the thread that caused its addition.

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

// ...

Example #6

We add a parallel edge if the label on it is different from that of existing edges.

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

thread t2([&] {

A.lock();

B.lock();

});

Consider the previous graph with a false positive. We now ignore the single threaded cycle:

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

B.unlock();

A.unlock();

B.lock();

A.lock();

});

The A->B->C cycle with three different threads is a real potential deadlock. The other A->B->C cycle, with t1, t2, t2, is a false positive and is ignored because t2 appears twice in the cycle.

And cycles still represent potential deadlocks.

mutex A, B, C;

thread t1([&] {

A.lock();

B.lock();

});

thread t2([&] {

B.lock();

C.lock();

C.unlock();

B.unlock();

C.lock();

A.lock();

});

thread t3([&] {

C.lock();

A.lock();

});

The deadlock can never happen because both threads would have to hold G at the same time in order to enter the dangerous section of the code.

However, consider this situation. For the deadlock to occur, both threads would have to hold G at the same time, which is impossible:

mutex A, B, G;

thread t1([&] {

G.lock();

A.lock();

B.lock();

});

thread t2([&] {

G.lock();

B.lock();

A.lock();

});

We say that G is a 'gatelock' protecting that cycle

This time, we will augment the edge labels to record the set of locks held by the thread causing an edge to be added to the lock graph.

A cycle is not valid if the gatelock sets of any two edges in the cycle intersect, i.e. if they share one or more gatelocks.
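The gatelock test can be sketched as a pairwise intersection check over the edges of a cycle. The `gated_edge` struct and both function names are assumptions made for this example, not d2's data structures:

```cpp
#include <cstddef>
#include <set>
#include <vector>

// Illustrative edge label: the locks held by the thread when the edge
// was added to the lock graph.
struct gated_edge {
    std::size_t from;
    std::size_t to;
    std::set<std::size_t> gatelocks;
};

// True if the two edges have at least one gatelock in common.
bool share_gatelock(gated_edge const& a, gated_edge const& b) {
    for (std::size_t lock : a.gatelocks)
        if (b.gatelocks.count(lock))
            return true;
    return false;
}

// Returns true if the cycle should still be reported, i.e. if no two
// edges in it share a gatelock.
bool gatelocks_are_disjoint(std::vector<gated_edge> const& cycle) {
    for (std::size_t i = 0; i < cycle.size(); ++i)
        for (std::size_t j = i + 1; j < cycle.size(); ++j)
            if (share_gatelock(cycle[i], cycle[j]))
                return false;
    return true;
}
```

With the G-protected code above, the edge from A to B carries {G, A} and the edge from B to A carries {G, B}; they intersect in G, so the cycle is discarded.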

Example #7

mutex A, B, C, D;

// ...

Example #7

t1 acquires A while holding nothing

mutex A, B, C, D;

thread t1([&] {

A.lock();

// ...

});

// ...

It is NOT redundant to put A in the set of gatelocks, as can be seen in the next slide.

Example #7

t1 acquires B while holding A; A is put in the gatelocks for that edge

mutex A, B, C, D;

thread t1([&] {

A.lock();

B.lock();

// ...

});

// ...

Here, we can see that putting B in the set of gatelocks when acquiring C is not redundant, because the edge from A to C needs it.

Example #7

t1 acquires C while holding A and B; all the edges that are added to the graph are marked with these gatelocks

mutex A, B, C, D;

thread t1([&] {

A.lock();

B.lock();

C.lock();

});

// ...

Example #7

t2 acquires D while holding nothing

mutex A, B, C, D;

thread t1([&] {

A.lock();

B.lock();

C.lock();

});

thread t2([&] {

D.lock();

// ...

});

Example #7

t2 acquires A while holding D

mutex A, B, C, D;

thread t1([&] {

A.lock();

B.lock();

C.lock();

});

thread t2([&] {

D.lock();

A.lock();

// ...

});

Example #7

t2 acquires B while holding D and A

mutex A, B, C, D;

thread t1([&] {

A.lock();

B.lock();

C.lock();

});

thread t2([&] {

D.lock();

A.lock();

B.lock();

});

Now consider the previous graph with a false positive:

As expected, the false positive is inhibited by the intersecting sets of gatelocks.

However, consider this situation, where t1 and t2 will never run in parallel:

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

t1.join();

thread t2([&] {

B.lock();

A.lock();

});

t2.join();

We say that t1 'happens-before' t2.

The happens-before relation

We can implement this relation by associating an identifier to segments of the code that are separated by the start or join of a thread.

When a thread starts another thread, both the parent and the child threads are assigned new segment identifiers.

When a thread joins another thread, the parent thread continues executing with a new segment identifier.

By drawing directed edges between the segments, we end up with a graph where node v is reachable from node u iff u happens before v.

If neither of two acquires happens before the other, we must assume that they can happen in parallel.
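A minimal sketch of such a segmentation graph follows. The class name, its member functions and the order in which segment numbers are handed out are assumptions made for this example; only the start and join rules come from the description above:

```cpp
#include <cstddef>
#include <map>
#include <set>
#include <vector>

// Illustrative sketch; names and segment numbering are assumptions.
class segmentation_graph {
public:
    using segment = std::size_t;
    using thread_id = std::size_t;

    explicit segmentation_graph(thread_id main_thread) {
        current_[main_thread] = new_segment(); // main starts in segment 0
    }

    // `parent` starts `child`: both continue in new segments that come
    // after the segment the parent was in.
    void start(thread_id parent, thread_id child) {
        segment before = current_.at(parent);
        segment parent_after = new_segment();
        segment child_first = new_segment();
        successors_[before].insert(parent_after);
        successors_[before].insert(child_first);
        current_[parent] = parent_after;
        current_[child] = child_first;
    }

    // `parent` joins `child`: the parent continues in a new segment that
    // comes after both its own segment and the child's last segment.
    void join(thread_id parent, thread_id child) {
        segment after = new_segment();
        successors_[current_.at(parent)].insert(after);
        successors_[current_.at(child)].insert(after);
        current_[parent] = after;
    }

    segment current_segment(thread_id thread) const {
        return current_.at(thread);
    }

    // u happens before v iff v is reachable from u by at least one edge.
    bool happens_before(segment u, segment v) const {
        std::set<segment> visited;
        std::vector<segment> stack(1, u);
        while (!stack.empty()) {
            segment s = stack.back();
            stack.pop_back();
            auto it = successors_.find(s);
            if (it == successors_.end()) continue;
            for (segment next : it->second) {
                if (next == v) return true;
                if (visited.insert(next).second) stack.push_back(next);
            }
        }
        return false;
    }

private:
    segment new_segment() { return next_++; }

    segment next_ = 0;
    std::map<segment, std::set<segment>> successors_;
    std::map<thread_id, segment> current_;
};
```

Two segments for which `happens_before` is false in both directions are the ones that have to be treated as potentially parallel.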

Example #8

main starts in segment 0

// ...

Example #8

main starts t1; main and t1 get new segments

thread t1([] {});

// ...

Example #8

main starts t2; main and t2 get new segments

thread t1([] {});

thread t2([] {

// ...

});

// ...

Example #8

t2 starts t3; t2 and t3 get new segments

thread t1([] {});

thread t2([] {

thread t3([] {});

// ...

});

// ...

Example #8

t2 joins t3; t2 continues in a new segment

thread t1([] {});

thread t2([] {

thread t3([] {});

t3.join();

});

// ...

Example #8

main joins t1; main continues in a new segment

thread t1([] {});

thread t2([] {

thread t3([] {});

t3.join();

});

t1.join();

// ...

Example #8

main joins t2; main continues in a new segment

thread t1([] {});

thread t2([] {

thread t3([] {});

t3.join();

});

t1.join();

t2.join();

Let's augment the lock graph by recording the segment in which an acquire is made.

A cycle is not valid if any edge in the cycle happens before another edge in the cycle.
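A sketch of this last filter, assuming the happens-before relation is available as a callable taking two segment identifiers. The `segmented_edge` struct, the `happens_before_fn` alias and the `edges_may_overlap` function are hypothetical names for this example:

```cpp
#include <cstddef>
#include <functional>
#include <vector>

// Illustrative edge label: the segment in which the acquire was made.
struct segmented_edge {
    std::size_t from;
    std::size_t to;
    std::size_t segment;
};

// Any callable answering "does segment u happen before segment v?".
using happens_before_fn = std::function<bool(std::size_t, std::size_t)>;

// Returns true if the cycle should still be reported, i.e. if no edge
// in it happens before another edge of the same cycle.
bool edges_may_overlap(std::vector<segmented_edge> const& cycle,
                       happens_before_fn const& happens_before) {
    for (std::size_t i = 0; i < cycle.size(); ++i)
        for (std::size_t j = 0; j < cycle.size(); ++j)
            if (i != j && happens_before(cycle[i].segment, cycle[j].segment))
                return false; // ordered acquires cannot deadlock each other
    return true;
}
```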

Let's go back to our false positive:

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

t1.join();

thread t2([&] {

B.lock();

A.lock();

});

main starts in segment 0

mutex A, B;

main starts t1; main and t1 get new segments

mutex A, B;

thread t1([&] {

// ...

});

t1 acquires A and then B, both in segment 2

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

main joins t1; main continues in a new segment

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

t1.join();

main starts t2; main and t2 get new segments

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

t1.join();

thread t2([&] {

// ...

});

t2 locks B and then A, both in segment 4

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

t1.join();

thread t2([&] {

B.lock();

A.lock();

});

main joins t2; main continues in a new segment

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

});

t1.join();

thread t2([&] {

B.lock();

A.lock();

});

t2.join();

The cycle will be ignored because segment 2 happens before segment 4.

Summary of the algorithm

The lock graph is a directed multigraph

- Vertices represent synchronization objects

- An edge from u to v means that a thread acquired v while holding u

- A cycle in the graph represents a potential deadlock

The segmentation graph is a directed acyclic graph

- Vertices represent segments of code separated by starts and joins

- A path from u to v means that u happens before v

We label each edge of the lock graph with

- The thread that performed the acquire

- The set of locks held by the thread ("gatelocks")

- The code segment in which the acquire is performed

To reduce false positives, we ignore a cycle if...

Any two edges are in the same thread

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

B.unlock();

A.unlock();

B.lock();

A.lock();

A.unlock();

B.unlock();

});

Any two edges share common "gatelocks"

mutex A, B, G;

thread t1([&] {

G.lock();

A.lock();

B.lock();

B.unlock();

A.unlock();

G.unlock();

});

thread t2([&] {

G.lock();

B.lock();

A.lock();

A.unlock();

B.unlock();

G.unlock();

});

Any edge happens before any other edge in the cycle

mutex A, B;

thread t1([&] {

A.lock();

B.lock();

B.unlock();

A.unlock();

});

t1.join();

thread t2([&] {

B.lock();

A.lock();

A.unlock();

B.unlock();

});

Limitations and drawbacks

- Requires modifying existing code

- No integration with an IDE

Roadmap

- Support a wider set of synchronization primitives

- Provide integration with more libraries

- Further reduce false positives (I have some ideas)

dyno: a dynamic analysis library

This is very experimental, so we won't go in depth

Idea

- Events are generated by a program and recorded

- Custom actions can be bound to these events

- Events can be loaded conveniently to analyze a program trace

First, define an event

namespace tags {

struct acquire;

struct lock_id;

}

typedef event<tags::acquire,

records<call_stack>,

records<thread_id>,

records<

custom_info<tags::lock_id, unsigned>

>

> acquire_event;

Then, bundle the events into a framework

typedef framework<

events<

acquire_event, release_event,

start_event, join_event

>,

backend<save_on_filesystem>

> d2_framework_t;

static d2_framework_t d2_framework;

Bind actions to events as wanted

dyno::on<tags::acquire>(d2_framework,

[](acquire_event e) {

// whatever

});

Generate events in your code

struct mutex {

void lock() {

dyno::generate<tags::acquire>(d2_framework, lock_id_);

}

private:

unsigned lock_id_;

};

Load events from a source to perform your custom analysis without hassle

struct populate_lock_graph {

void operator()(acquire_event e) const;

void operator()(release_event e) const;

template <typename AnyOtherEvent>

void operator()(AnyOtherEvent) const;

};

dyno::load_events("some_directory", populate_lock_graph());

The project was started after noticing that much of the code from d2 could be generally useful for dynamic analysis.

Use cases

- Simplifying the implementation of d2

- Benchmarking and checking memory allocations

- Gathering statistics during program execution